Quality assurance (QA) is critical to any thriving call center. Without it, you have no way of knowing how agents are truly performing, and your customers won’t receive top-notch service.

A proper contact center QA program helps call centers retain happy customers, improve brand and voice consistency, and keep agents in check.

This post explores the value of a strategic quality assurance program for enhancing your call center’s performance and increasing customer loyalty.

Defining Quality Assurance – A Quick Refresher

So, what exactly is call center quality assurance? Simply put, it is a process or set of strategies designed to help align your organization’s goals with how your agents interact with customers.

For example, if your business needs most customer calls resolved within a set amount of time, a good quality assurance system can track how each agent performs against that benchmark. Based on the data the QA program collects, you can coach and train agents toward this goal and any others that matter to your call center.
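To make the benchmark idea concrete, here is a minimal Python sketch that computes each agent’s average handle time (AHT) using the commonly used definition (talk time plus hold time plus after-call work, divided by calls handled) and lists agents above a target. The `Call` record, the agent names, and the 360-second target are hypothetical, chosen only for illustration; a real QA platform would pull these figures from its own call data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Call:
    agent: str
    talk_s: int  # talk time, seconds
    hold_s: int  # hold time, seconds
    acw_s: int   # after-call work, seconds


def aht_by_agent(calls):
    """Average handle time per agent: (talk + hold + after-call work) / calls."""
    totals = defaultdict(int)
    counts = defaultdict(int)
    for call in calls:
        totals[call.agent] += call.talk_s + call.hold_s + call.acw_s
        counts[call.agent] += 1
    return {agent: totals[agent] / counts[agent] for agent in totals}


def agents_over_benchmark(calls, target_s):
    """Agents whose AHT is above the target, sorted by name."""
    return sorted(agent for agent, aht in aht_by_agent(calls).items() if aht > target_s)


calls = [
    Call("ana", 240, 30, 60),   # 330 s
    Call("ana", 300, 0, 90),    # 390 s
    Call("ben", 420, 60, 120),  # 600 s
    Call("ben", 200, 20, 40),   # 260 s
]
print(aht_by_agent(calls))                # {'ana': 360.0, 'ben': 430.0}
print(agents_over_benchmark(calls, 360))  # ['ben']
```

Ana lands exactly on the 360-second target, so only Ben is flagged; whether "at target" counts as passing is a policy choice for your team.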

Call centers use QA plans to monitor things like:

- Key performance indicators (KPIs) like average handle time (AHT) and conversion rate

- Whether agents deliver required compliance and regulatory statements

- Conversational win markers and responses to customers

Why Quality Assurance is Mission Critical to Your Call Center

So, why is call center quality assurance necessary? Both call centers and customers benefit dramatically from a QA program. QA gives managers and supervisors the data and insights they need to track whether agents hit all of their script marks during customer interactions. With the knowledge gained from quality monitoring scorecards, call centers can improve the service they offer clients, which leads to a better customer experience and, ultimately, higher satisfaction.

QA and customer satisfaction are intrinsically linked

Customer satisfaction is crucial to the overall success of contact centers. One Zendesk study polled over 1,000 US customers and found that 58% of respondents stopped buying from a company after receiving poor customer service.

Conversely, 54% of the survey respondents who interacted with good customer service agents used or purchased more services or products from the company, and 67% recommended the company to others.

Keeping customers is extremely important because acquiring new ones is expensive. According to Harvard Business Review, acquiring a new customer costs between 5 and 25 times as much as retaining an existing one. Your investment in QA will pay dividends in the form of loyal, satisfied customers.

That’s why it’s so important to have a call center QA framework in place.

Creating a Call Center QA Framework

A call center QA framework is a foundational step in evaluating and improving your service quality.

To create a QA framework, you need to:

Identify Vital Metrics and KPIs

Instead of generating tons of metrics for your team, focus your efforts on the metrics and KPIs that have the biggest impact on your business needs.

For instance, if your call center regularly receives large volumes of customer queries, tracking Average Handle Time (AHT) may matter more than tracking other metrics.

Efficiently Track and Analyze Your Key Metrics

Instead of relying on legacy monitoring methods like manual recording, paid surveys, and mystery shoppers, you can utilize advanced AI-enabled solutions to automate QA monitoring, data collection, and analytics.

Develop and Deploy Continuous Improvement Strategies

Use the data and analytics you collect to optimize customer interactions and agent performance. For example, a low First Contact Resolution (FCR) rate may indicate that your agents lack the knowledge and soft skills needed to address customer queries, meaning you need to invest in more tailored training and development.
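As an illustration, FCR is usually computed as the share of customer issues resolved on the first contact. The sketch below assumes a hypothetical, chronologically ordered list of `(issue_id, resolved)` contact records; real systems define "first contact" and "resolved" in their own ways, so treat this as one possible reading.

```python
def first_contact_resolution(contacts):
    """FCR = issues resolved on their first contact / total distinct issues.

    `contacts` is a chronological list of (issue_id, resolved) tuples.
    """
    first_outcome = {}
    for issue_id, resolved in contacts:
        # Only the first contact for each issue counts toward FCR.
        first_outcome.setdefault(issue_id, resolved)
    if not first_outcome:
        return 0.0
    return sum(first_outcome.values()) / len(first_outcome)


contacts = [
    ("T1", True),                                 # resolved first time
    ("T2", False), ("T2", True),                  # needed a second contact
    ("T3", True),                                 # resolved first time
    ("T4", False), ("T4", False), ("T4", True),   # needed three contacts
]
print(f"FCR: {first_contact_resolution(contacts):.0%}")  # FCR: 50%
```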

Types of QA Frameworks for Call Centers

There are three different types of QA frameworks that you can utilize to optimize your call center’s quality management: operational framework, tactical framework, and strategic framework.

Operational QA Framework

The operational framework tackles QA from three primary angles, all focused on short-term improvement: daily performance metrics, identifying weaker performers, and ensuring quotas are met.

A key to implementing the operational QA framework is closely monitoring and evaluating your call center’s progress against its daily goals.

Tactical QA Framework

Unlike the operational framework, the tactical QA framework promises to deliver a longer-term impact by addressing the root cause of recurring service quality issues, such as unoptimized workflows and insufficient agent knowledge and training.

Agent training and restructuring are among the possible solutions a tactical framework can introduce to your contact center. Its primary objective, however, is lasting improvement rather than short-term gains.

Strategic QA Framework

The strategic QA framework goes many steps further than the tactical framework. It sets the roadmap for your contact center’s activities and how these activities align with the company’s overarching business goals.

A key metric in the strategic QA framework is the Net Promoter Score (NPS), which reflects customer loyalty and customers’ likelihood of recommending the business. The strategic framework also explores how customer service contributes to business objectives, ways to enhance customer loyalty and brand reputation, and how to define and reward employee success.
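NPS comes from the standard 0 to 10 "How likely are you to recommend us?" survey question: respondents scoring 9 or 10 are promoters, 0 to 6 are detractors, and NPS is the percentage of promoters minus the percentage of detractors. A minimal Python sketch, using made-up survey ratings:

```python
def net_promoter_score(ratings):
    """NPS = % promoters (9-10) minus % detractors (0-6) on a 0-10 scale."""
    if not ratings:
        raise ValueError("need at least one rating")
    if any(not 0 <= r <= 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


ratings = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]  # 5 promoters, 2 passives, 3 detractors
print(net_promoter_score(ratings))  # 20.0
```

Passives (7 or 8) count toward the total but not toward either group, which is why NPS can range from -100 to +100.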

Difference Between Quality Control (QC) and Quality Assurance (QA)

While both Quality Control (QC) and Quality Assurance (QA) aim to maintain and improve customer service, they emphasize different areas of service quality.

QC, in the context of contact centers, typically involves monitoring and evaluating the performance of individual agents. It relies on recording and reviewing calls to analyze agent performance across soft skills, technical knowledge, script adherence, and compliance.

QC is a reactive process, meaning it usually kicks in after an interaction has occurred to identify problems and generate solutions for them. The purpose of QC is to benchmark your agents’ performance and call center practices against set standards and take corrective action.

QA, on the other hand, focuses on the entire customer service delivery process, emphasizing a more holistic approach to quality than QC. It involves developing and implementing systems and procedures to ensure that every customer interaction meets a certain standard of quality.

Unlike QC, QA is proactive, focusing on preventing problems from becoming habits by putting strict standards and guidelines into place. Some of the solutions that QA may bring to the table are SOPs (Standard Operating Procedures), policies, tailored coaching programs, and metrics setting.

Call Center Quality Assurance Program Best Practices: Essential Actions to Increase Customer Satisfaction

While most call center professionals have a solid grasp of the fundamentals, call center quality management specialists still debate best practices. With that in mind, here are some general best practices to help ensure your organization gets the most out of its QA program.

Provide Clear Goals

Ensure your QA guidelines and scorecards are clear to your agents so they know what priorities the call center is working towards. It’s vital your agents know precisely what is expected of them during each customer interaction so they keep goals top of mind.

When you start drafting your quality assurance program, it’s important that you gather stakeholders and lay the foundation for the program’s objectives. Defining and monitoring the relevant KPIs, such as AHT or customer churn rate, is key here.

Share Insights Across the Organization

Managers and supervisors gain access to tons of vital data and insights through call center quality assurance scorecards and customer surveys. However, this critical information should be shared across the call center so all team members can learn from it and improve. Equip your agents with details about emerging trends, how to handle challenging questions, call metrics, and more for continual improvement and quality assurance calibration.

QA Everyone

Instead of randomly sampling across your employees, analyze every agent’s QA scores and hold each agent to the same standard. By doing this, your QA team will gain insights from everyone, from your top performers and tenured agents to your newest hires. With this full spectrum of data, you can share what’s working well and what isn’t (see previous point).

Give Regular and Consistent Feedback

Hold QA training or calibration sessions on a regular, recurring basis. These sessions empower your agents to deliver better customer experiences and conversations based on customer feedback. A standard evaluation form, calibration sessions, and QA software help avoid mixed messaging. Be sure to highlight where agents are performing well and provide constructive feedback on areas they can improve.

Assign Quality Assurance Ownership

It’s vital that you give a point person or persons complete ownership of your contact center quality management process. Clearly define who is in charge of planning, implementing, and measuring your contact center quality assurance. Without this, your organization may not get the full benefits of the QA program.

Use a Relevant Sample Size for QA

Traditionally, most call centers relied on random sampling to measure QA. However, today, with rising customer expectations regarding service levels, this method has become obsolete.

A more effective approach is to use a larger sample size that supports accurate analytics and reports. However, since large contact centers typically handle a high number of customer interactions per day, you might need to focus your sampling. For instance, you can draw your sample from complex interactions or from interactions handled by new hires, getting focused insights into each area to identify improvement opportunities.
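One way to focus sampling is to build a pool per focus area and draw a fixed number of interactions from each with a seeded random generator, so the sample is reproducible. In this sketch the `Interaction` fields, the `is_complex` flag, and the 90-day "new hire" cutoff are all hypothetical assumptions:

```python
import random
from dataclasses import dataclass


@dataclass
class Interaction:
    call_id: str
    agent_tenure_days: int
    is_complex: bool  # hypothetical flag, e.g. escalated or multi-issue calls


def focused_sample(interactions, per_group, seed=0, new_hire_days=90):
    """Draw up to `per_group` interactions from each focus area, reproducibly."""
    rng = random.Random(seed)
    pools = {
        "complex": [i for i in interactions if i.is_complex],
        "new_hire": [i for i in interactions if i.agent_tenure_days < new_hire_days],
    }
    # min() keeps sample() from failing when a pool is smaller than per_group.
    return {name: rng.sample(pool, min(per_group, len(pool))) for name, pool in pools.items()}


interactions = [
    Interaction(f"call-{n}", agent_tenure_days=30 if n % 3 == 0 else 400, is_complex=n % 5 == 0)
    for n in range(100)
]
sample = focused_sample(interactions, per_group=10)
for name, picked in sample.items():
    print(name, [i.call_id for i in picked])
```

A complex call handled by a new hire can land in both samples; deduplicate before review if that matters to your team.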

Advanced contact center intelligence solutions are even capable of automatically scoring all calls, not just a few random samples. This ensures that the insights you get from these tools are accurate and relevant.

Combine Automated and Manual Data Collection Practices

Utilizing both automated and manual data collection activities is important for you to get a holistic view of your call center’s performance and improve quality assurance.

Manual data collection can be time-consuming, but it’s still an important part of the QA process. For instance, managers can use call monitoring to listen in on agent conversations and intervene when needed. AI-powered software solutions can streamline this process by setting automatic alerts that trigger only when an agent goes off script or can’t effectively resolve the customer’s problem. Other forms of manual data collection include polls and surveys.

That said, manual data collection can produce skewed results, so you shouldn’t rely on it entirely. Automated data collection delivers fast, accurate insights that your entire customer service team can put into action in real time. With automated call center data analytics tools, you can track quantitative metrics like service levels and call abandonment rates.

Avoid Aggressive Evaluation or Agent Schedules

To make the most out of your QA program, you need to schedule evaluations consistently to collect relevant and actionable data. Continuous evaluations also help your team stay on track with regular, personalized feedback mechanisms. However, tightly scheduled evaluations may produce inaccurate data.

For smaller contact centers, scheduling semi-annual or annual evaluations may be enough to get a comprehensive overview of your call center’s performance.

On the flip side, larger call centers that handle high call volumes each day may opt for monthly or even weekly evaluations to continuously capture meaningful insights and act on them quickly.

Further, it’s crucial that you set realistic schedules for your agents to avoid burnout. For instance, if an agent’s breaks always fall outside peak hours, that agent will be drowning in work through every rush and is likely to underperform. This can also raise turnover in your contact center due to low employee satisfaction.

Ten Ways to Improve Call Center Quality Assurance

Even with an understanding of best practices, there’s always room for improvement regarding the call center QA process. Here are some tips to improve call center quality assurance.

1. Track Your Team’s Performance

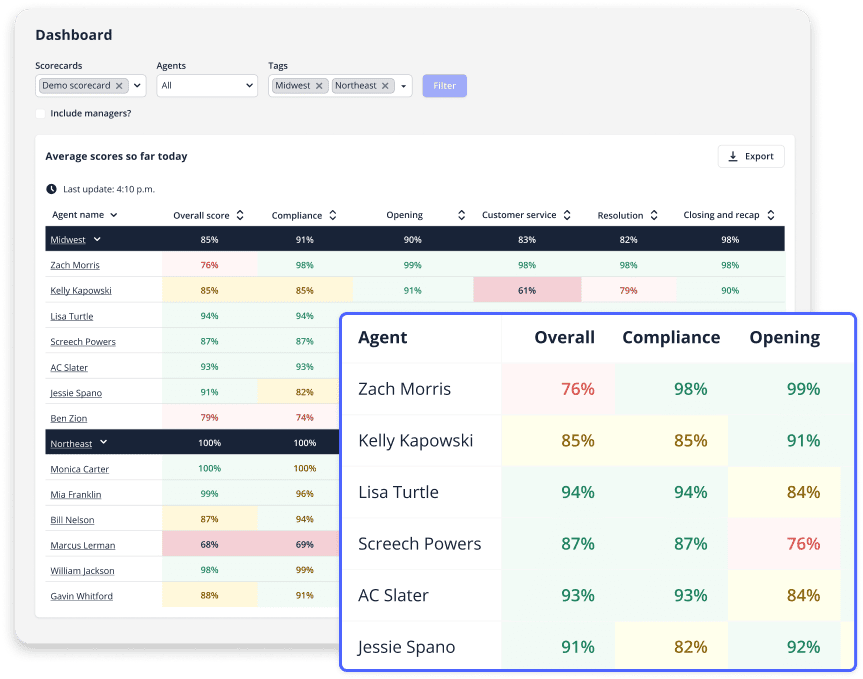

Track the performance of all your team members using a call center agent performance scorecard. With that data, you can create personalized coaching guides for both underperformers and top performers that highlight their strengths and weaknesses and reinforce script quality control.

2. Provide Regular Training and Coaching Sessions

Your QA data should be the backbone of your agents’ training program and coaching sessions. As part of your call calibration process, have your top QA specialist share insights to drive behavior change. If possible, schedule call calibration sessions soon after the call is completed, so it’s top of mind for agents.

3. Evaluate Continuously

Instead of random sampling at select times, where you can easily miss the bigger picture, have your contact center QA team analyze call data throughout the month with a call center quality assurance evaluation sheet. This will give you the most accurate picture of your contact center’s performance.

Even if a well-performing agent is doing a great job most of the time, there’s no guarantee that their performance will remain consistent. Factors like agent burnout and changing customer expectations may lead to your agents performing at a lower level than what they’re used to.
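Continuous evaluation makes this kind of drift visible. As a rough sketch, the function below compares an agent’s most recent QA scores with their earlier average and flags a drop larger than a threshold. The window size, the 5-point threshold, and the sample scores are hypothetical and would need tuning to your own scoring scale:

```python
from statistics import mean


def flag_score_decline(scores, window=4, drop_threshold=5.0):
    """Return True if the mean of the last `window` QA scores is more than
    `drop_threshold` points below the mean of all earlier scores."""
    if len(scores) < 2 * window:
        return False  # not enough history for a fair comparison
    baseline = mean(scores[:-window])
    recent = mean(scores[-window:])
    return baseline - recent > drop_threshold


weekly_scores = [92, 94, 93, 95, 91, 86, 84, 85, 83]
print(flag_score_decline(weekly_scores))  # True (baseline 93, recent 84.5)
print(flag_score_decline([90, 91, 89, 90, 92, 90, 89, 91]))  # False
```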

It’s also a good idea to enable your agents to score their own calls. Sometimes, your agents may not immediately notice that there’s a problem with how they interact with customers until they listen to and assess their own calls. This can drastically boost agent engagement, especially if you present the data in a centralized dashboard that your agents can independently access.

Further, you should allow your agents to dispute assessments. By receiving feedback from your agents on how calls are assessed and scored, you may be able to spot gaps and inconsistencies in your QA process.

It’s also important to create an action plan for your calibration process to improve quality assurance scores through continuous improvement and agent engagement. Since different evaluators may score calls based on varying criteria, calibration sessions help ensure that all evaluators are on the same page. This can be as simple as having your evaluators listen to one call per week and comparing how each of them scores it. Setting guidelines for the calibration process can also be useful here.
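A calibration session can be summarized numerically: have each evaluator score the same call on the same criteria, then look for criteria where their scores diverge. The evaluator names, criteria, 1 to 5 scale, and one-point tolerance below are hypothetical:

```python
def calibration_gaps(scores_by_evaluator, tolerance=1):
    """Return {criterion: spread} for criteria where evaluators' scores for the
    same call differ by more than `tolerance` points.

    `scores_by_evaluator` maps evaluator -> {criterion: score}.
    """
    criteria = set().union(*(s.keys() for s in scores_by_evaluator.values()))
    gaps = {}
    for criterion in sorted(criteria):
        values = [s[criterion] for s in scores_by_evaluator.values() if criterion in s]
        spread = max(values) - min(values)
        if spread > tolerance:
            gaps[criterion] = spread
    return gaps


scores = {
    "evaluator_a": {"greeting": 5, "empathy": 4, "resolution": 5},
    "evaluator_b": {"greeting": 5, "empathy": 2, "resolution": 4},
    "evaluator_c": {"greeting": 4, "empathy": 3, "resolution": 5},
}
print(calibration_gaps(scores))  # {'empathy': 2}
```

Here the evaluators broadly agree on greeting and resolution but not on empathy, which suggests that criterion needs clearer scoring guidelines.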

4. Integrate Quality Assurance Insights into Your Strategy

Traditionally, quality assurance practices were viewed as isolated objectives, where you tackled each problem individually. However, QA is increasingly implemented from a strategic perspective to realize its full benefits. In other words, every single piece of data can have a ripple effect across your entire organization.

For example, if a particular agent is having issues handling customer queries related to a particular problem, coaching that agent will help them improve their performance and learn how to tackle such queries more effectively. But what if you view this as an opportunity to improve your coaching sessions for your entire team? If one agent is having certain difficulties related to their job, there’s a good chance that other agents may encounter similar problems.

This is how you can implement QA as a strategy, not a box-ticking exercise. It’s also a good idea to share your insights and best practices with the entire organization, including your sales, product, and marketing teams. For example, if your customer service data-based insights imply that your product isn’t meeting your customers’ expectations, your product team can use this intel to improve the product.

5. Involve Your Agents When Creating Your QA Checklists

Instead of just having the managerial team come up with the quality assurance checklists, be sure to also solicit the feedback of your call center agents. After all, they are the primary boots on the ground when it comes to customer interactions. This will allow you to tap into their insight. It will also give your agents ownership of the QA process and contact center quality management.

6. Capture Customer Sentiment

Understanding customer sentiment is crucial for quality assurance. If you don’t know how your customers feel about your brand and service level, you won’t be able to meet their expectations, resulting in higher churn rates and more friction in their buyer’s journey.

AI-powered conversation intelligence solutions can automatically capture and track customer sentiment using natural language processing. By diving deep into customers’ emotions and pain points, you can drive your quality assurance improvement efforts forward more effectively.

7. Use Gamification

Use challenges, leaderboards, and other games to tap into agents’ competitive spirit and reward them. Gamification encourages agents to hit all their marks during customer inquiries and gives everyone transparency into the QA process.
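A leaderboard can be as simple as ranking agents by their average QA score. This sketch assumes hypothetical per-agent score lists and breaks ties alphabetically; a real program might weight recent calls more heavily or rank on several metrics at once:

```python
from statistics import mean


def leaderboard(qa_scores):
    """Rank agents by average QA score, highest first; ties break alphabetically.

    Agents with no scored calls are left off the board.
    """
    averages = {agent: mean(scores) for agent, scores in qa_scores.items() if scores}
    ranked = sorted(averages.items(), key=lambda item: (-item[1], item[0]))
    return [(rank, agent, round(avg, 1)) for rank, (agent, avg) in enumerate(ranked, start=1)]


qa_scores = {"ana": [88, 92, 95], "ben": [90, 91], "cy": [97, 85, 90], "dev": []}
for rank, agent, avg in leaderboard(qa_scores):
    print(rank, agent, avg)
# 1 ana 91.7
# 2 cy 90.7
# 3 ben 90.5
```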

8. Understand the Bigger Picture

One of the key pieces of the QA puzzle is to understand the bigger picture in today’s omnichannel business landscape. Realizing that your contact center is just one of many customer touchpoints that include social media, email, retail/online stores, and even third-party review sites is vital for improving customer experience and quality assurance.

Advanced call center tracking solutions can monitor and analyze customer interactions on all fronts, ensuring that you get accurate insights that give you a more holistic view of customer satisfaction and drive better decision-making.

9. Optimize Your Call Monitoring Strategy

Enhance the efficiency of your monitoring process by developing a detailed, methodical plan that enables your leaders to collect large amounts of data. They can use this data to improve both call handling and call quality, and to simplify monitoring, tracking, and validating the effectiveness of the QA process.

Here, it’s essential to ensure that your monitoring methods match your company’s needs. A good practice is to avoid relying exclusively on standard monitoring procedures. Instead, try to diversify your data collection techniques, while focusing on both quantitative and qualitative data analysis.

Throughout the process, you’ll need to experiment a bit with different methods and combinations until you settle on what works best for your contact center. Further, you should encourage agents to evaluate their own performance with self-analysis scorecards.

10. Use a Suitable QA Tracking Tool

To get the most out of your call center quality scorecards and QA scores and make them actionable sooner, you’ll want to have suitable customer support quality assurance tools that allow you to track things in real-time. Check out the next section below to learn how contact center software can automate and modernize your quality assurance tracking.

Should I Use Real-Time Tools for Call Center Quality Assurance?

Many call centers still rely on a manual quality assurance process instead of automated quality monitoring. While this isn’t necessarily a bad thing, they may be missing out on a valuable addition to their customer service strategy.

Let’s say a contact center QA team scores two to eight calls or other customer interactions per agent per month. That’s fairly typical for contact centers today, yet it covers only a fraction of the total calls a call center handles. The team could easily miss interactions that are hurting key performance indicators.
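The arithmetic behind "only a fraction" is easy to check. Assuming, purely for illustration, that an agent handles 40 calls a day over 21 working days (840 calls a month), reviewing two to eight of them covers roughly 1% of calls or less:

```python
def review_coverage(reviewed_per_month, calls_per_day, working_days=21):
    """Fraction of an agent's monthly calls that manual QA actually reviews."""
    monthly_calls = calls_per_day * working_days
    return reviewed_per_month / monthly_calls


# Hypothetical volume: 40 calls/day x 21 working days = 840 calls per agent per month.
for reviewed in (2, 8):
    print(f"{reviewed} reviews -> {review_coverage(reviewed, calls_per_day=40):.2%} of calls")
# 2 reviews -> 0.24% of calls
# 8 reviews -> 0.95% of calls
```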

Rather than relying on small, random samples of calls to gauge agent performance, QA can be done in real time with 100% visibility into all calls. With an automated, real-time solution, every call is already reviewed and scored, so QA specialists can focus on acting on those results instead of scoring calls themselves.

Additionally, a real-time call center QA solution is less subjective than manual processes. Both approaches require set criteria for scoring calls. But while a real-time solution uses AI to score every interaction automatically with the same logic, manual processes require QA specialists to score each call subjectively. An automated call center QA framework removes nearly all subjectivity while saving contact center managers time.

Integrating Technology into Your QA Workflow

With the right tech, you can significantly improve your QA workflows and techniques. Some of the trending tech solutions and tools in the call center industry include:

- Automatic call recording: Automatically record and transcribe 100% of calls.

- Speech analytics: AI conversation intelligence automatically analyzes calls and searches for sentiment, keywords, and script adherence.

- Omnichannel customer support: Deploy solutions that integrate all of your support channels into a single platform, facilitating customer sentiment and behavior monitoring and analysis.

- Automated metrics tracking: Performance tracking solutions monitor your vital metrics automatically to streamline your QA process.

Challenges in Call Center Quality Assurance

In a contact center environment, maintaining and improving quality assurance comes with numerous potential challenges. These include:

Overabundance of Data

One common challenge in call center quality assurance is dealing with too much data. When there’s an overwhelming amount of information to sift through, it can slow down the QA process and potentially lead to incorrect assessments.

For example, if a call center collects vast amounts of call recordings, transcripts, customer feedback, and performance metrics without a clear strategy for organizing and analyzing this data, it can become a hindrance rather than a help.

Improper Data Interpretation

Alongside the overabundance of data, improper interpretation can lead to misguided judgments.

Without the right context or understanding of the metrics being analyzed, supervisors may draw incorrect conclusions about agent performance or customer satisfaction levels.

For instance, if a call center focuses solely on average call duration as a metric for agent performance without considering factors like issue resolution or customer satisfaction, they might mistakenly penalize agents who spend more time resolving complex issues effectively.

High Turnover Rates

Continuous hiring and training of new agents can make the job of your QA team more challenging as they must ensure that new agents adhere to policies, security, quality, and more.

Ensuring all agents gather and deliver 100% accurate information to consumers is a top priority for any QA manager and something they must take incredibly seriously. Inconsistency or inaccuracy can put your company at risk as, in many cases, the information agents deliver is a legally binding disclosure that needs to be read accurately 100% of the time.

AI solutions that provide pre-recorded disclosures and automated statement delivery that’s integrated with each call can make onboarding processes much easier. They guarantee proper delivery of information and legally binding statements on every call and enable agents to use a simple tool to follow complex conversational processes.

Further, these solutions can cut down on training time, saving call centers money and reducing human error, which lowers the risk of legal liabilities, fines, defects, and customer dissatisfaction.

Time-Consuming QA Activities

Quality assurance procedures such as interaction monitoring, reviewing call recordings, and analyzing customer feedback can be incredibly time-consuming. This can strain resources and delay feedback loops, impacting the efficiency of the call center. For example, if supervisors spend excessive time listening to call recordings without leveraging technology for transcription or automated analysis, it can significantly slow down the QA process and limit the number of calls they can evaluate.

To eliminate wasteful activities, you can leverage automation and integration in your QA processes. Speech analytics software solutions conduct automatic transcription, analysis, and scoring based on predefined rules and keywords.
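To show what scoring "based on predefined rules and keywords" can mean in practice, here is a minimal rule-based scorer that applies identical checks to every transcript. The rule names, regular expressions, and point values are hypothetical examples, not any vendor’s actual rules; commercial speech analytics tools may use far more sophisticated language analysis than pattern matching:

```python
import re
from dataclasses import dataclass


@dataclass
class Rule:
    name: str
    pattern: str    # regular expression, matched case-insensitively
    required: bool  # True: phrase must appear; False: phrase must not appear
    points: int


def score_transcript(transcript, rules):
    """Apply the same rules to a transcript; return (score, max_score, failed rule names)."""
    score, max_score, failed = 0, 0, []
    for rule in rules:
        max_score += rule.points
        found = re.search(rule.pattern, transcript, flags=re.IGNORECASE) is not None
        if found == rule.required:
            score += rule.points
        else:
            failed.append(rule.name)
    return score, max_score, failed


rules = [
    Rule("recording disclosure", r"this call (may be|is being) recorded", True, 40),
    Rule("greeting", r"\bthank you for calling\b", True, 20),
    Rule("no guarantees", r"\bguarantee(d)?\b", False, 40),
]
transcript = "Thank you for calling! This call may be recorded. Your refund is guaranteed."
print(score_transcript(transcript, rules))  # (60, 100, ['no guarantees'])
```

Because every transcript runs through the same rule list, two identical calls always receive identical scores, which is the consistency advantage automated scoring offers over manual review.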

Additionally, you can deploy automated customer feedback surveys to measure customer satisfaction after each call.
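Post-call survey results are often summarized as a CSAT score. One common convention, assumed here, counts responses of 4 or 5 on a 1 to 5 scale as satisfied and reports them as a percentage of all responses; the survey data below is made up:

```python
def csat(responses, satisfied_min=4):
    """CSAT (%) = share of survey responses at or above `satisfied_min` on a 1-5 scale."""
    if not responses:
        raise ValueError("no survey responses")
    satisfied = sum(1 for r in responses if r >= satisfied_min)
    return 100 * satisfied / len(responses)


post_call = [5, 4, 3, 5, 2, 4, 5, 1]  # one response per surveyed call
print(csat(post_call))  # 62.5
```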

Visualizing key QA metrics and performance insights is also important here, and real-time dashboards and reports enabled by AI/ML algorithms can handle this.

You can also utilize Application Programming Interfaces (APIs) to sync your QA tools’ data with your core systems.

Resistance From Agents

Introducing new QA solutions or methodologies can face resistance from agents, particularly if they perceive the changes as a threat to their autonomy or job security.

For instance, if a call center implements a new performance scoring system that agents feel is unfair or inaccurate, they may resist adopting it and may even become demotivated or disengaged, impacting overall performance.

Here, it’s essential to clarify the goals of implementing such solutions as a means of empowering, not replacing, your agents.

Privacy Concerns

Deploying advanced QA solutions that involve collecting, storing, and analyzing call data and recordings can raise privacy concerns for both agents and customers.

Agents may worry about their conversations being monitored, while customers may have concerns about their personal information being recorded and stored.

For example, if a call center implements speech analytics software to analyze customer interactions for quality purposes, it must ensure compliance with data protection regulations such as GDPR or HIPAA to address these privacy concerns effectively.

Modernizing Quality Assurance with Balto Software

If your current QA program is a painfully manual process, it doesn’t have to be. Balto’s state-of-the-art quality assurance software makes it easy and provides immediate impact.

With Balto’s call quality monitoring solution, call centers have real-time insights into all calls as they happen instead of collecting and reviewing data from random samples days or weeks later, allowing you to make positive changes faster.

Real-Time QA automatically scores 100% of calls, so you can spend time improving calls — not scoring them. Check out this case study to see how a leading flooring company went from only monitoring about 1% of calls to monitoring all calls within six months of working with us.

Get Real-Time Quality Assurance Analytics for Your Organization

Some people think simple and effective call center QA software sounds too good to be true. It’s not. Get a free demo and see the benefits for yourself. We’re sure you’ll see how beneficial real-time QA analytics can be for your contact center and customer satisfaction.

Stop Mistakes Before They Become Habits

Put QA on offense and get immediate insights into 100% of your calls with lightning-fast installation and onboarding.